AI Video Models in 2025: Which Ones Actually Deliver

Vaibhav Sisinty tested over 10 AI video models in 2025. Kling O1 wins for character consistency, Runway Gen-4.5 for physics and creative control, WAN 2.5 for audio sync, and Sora 2 for long-form video despite a disappointing launch.

In his newsletter, he ranks them by use case, breaking down which tools excel at character consistency, physics simulation, audio-visual sync, creative control, and production-ready output.

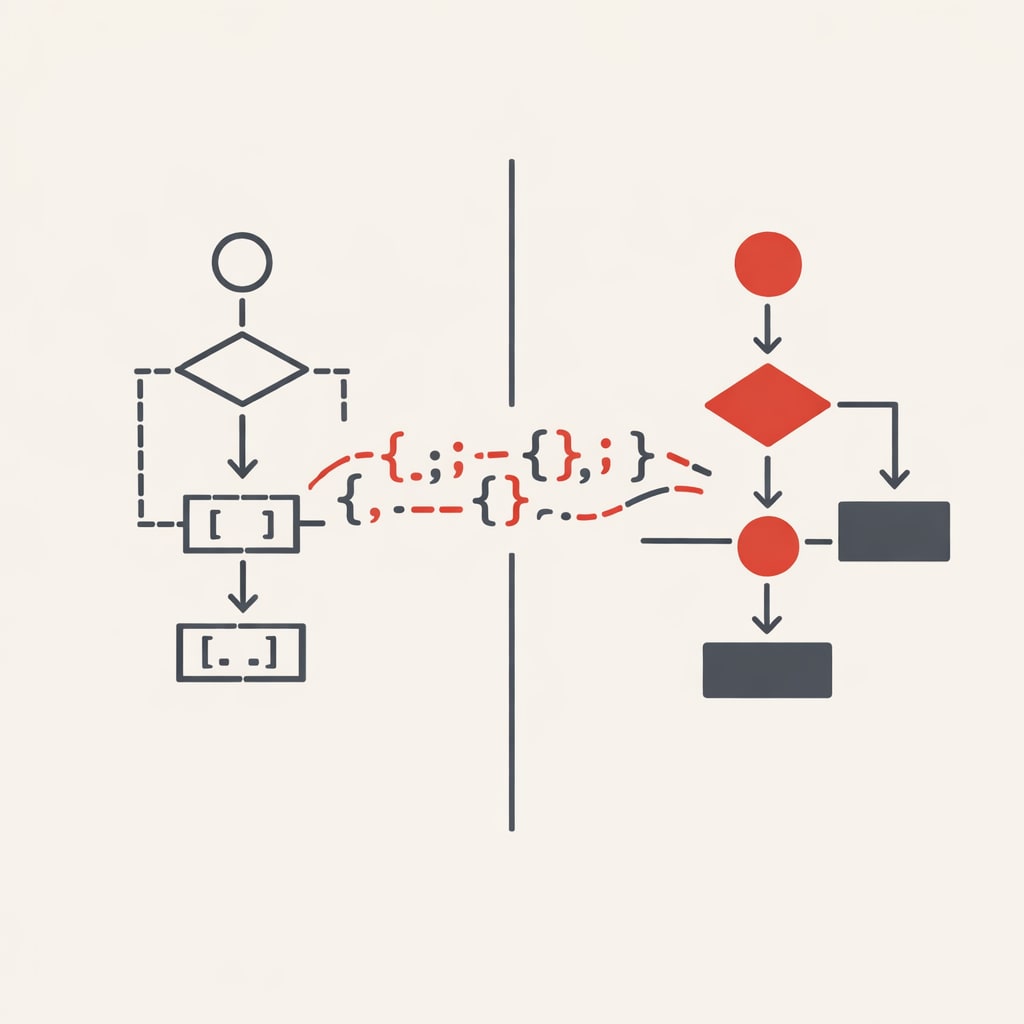

Video generation is a harder problem than text or image generation. Text models have had years to mature, while video models have existed for only about two years. Even so, 2025 brought substantial improvements across the board.

Character consistency

Kling O1, launched in December 2025, takes the lead here. It's billed as the first unified multimodal video model, with what the company calls "director-like memory": the ability to maintain a character's appearance and behaviour across multiple generations.

Sisinty notes that it edges out Veo 3.1 for character work, though the gap isn't large. Combined with the other features Kling released during its launch week, it has become his default choice for video generation.

The caveat: if you're already using Kling 2.5, O1 isn't a major leap forward. It's an incremental improvement, not a breakthrough.

Physics and realism

Runway Gen-4.5 leads the benchmarks. With a score of 1,247 Elo points, it ranks first on Artificial Analysis's text-to-video leaderboard.
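An Elo score only means something relative to the other models on the same leaderboard. Assuming Artificial Analysis uses the standard logistic Elo formula with a 400-point scale (the newsletter doesn't describe exactly how its ratings are computed), a rating gap can be converted into an expected head-to-head preference rate. The sketch below does that conversion; the rival rating of 1,200 is purely hypothetical and is not taken from the leaderboard.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected probability that model A is preferred over model B,
    assuming the standard Elo model (logistic curve, 400-point scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# 1,247 is Gen-4.5's reported score; the rival rating is a hypothetical example.
gen45_rating = 1247
hypothetical_rival_rating = 1200

p = elo_expected_score(gen45_rating, hypothetical_rival_rating)
print(f"Expected preference rate vs a 1,200-rated model: {p:.1%}")
```

Under these assumptions, a 47-point lead works out to an expected preference rate of roughly 57% in blind head-to-head comparisons: a real but modest edge per matchup.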

The physics understanding is particularly strong. Objects move with believable force. Liquids behave realistically. Fabrics and hair stay consistent even during complex motion. The References tool helps maintain world consistency across generations.

The downsides start with cost: maximum quality on the Standard plan runs £0.08 per image. The model also struggles with causal reasoning (effects sometimes appear before their causes) and object permanence, and it shows a success bias, where difficult actions succeed more often than they realistically should.

Audio-visual synchronisation

Alibaba's WAN 2.5, launched September 2025, solved a problem that plagued earlier video models: mismatched audio.

In earlier AI-generated videos, characters' mouths often didn't match their speech, and background music drifted out of sync. WAN 2.5 addresses this through joint multimodal training.

The model generates three audio elements simultaneously: ambient sound, background music, and voice narration that matches on-screen action. You can specify emotions, tone, rhythm, pacing, and volume. The system delivers natural speech from whispers to shouts.

Creative control

Runway Gen-4.5 wins again here, primarily through its References tool. This enables character and scene consistency without complex workflows.

Act-One (October 2024) and Act-Two (2025) let you animate characters without motion-capture equipment. Just a webcam and your performance.

Everything Everywhere All at Once used Runway for VFX. The Late Show with Stephen Colbert uses it for editing. But there's a learning curve. The tool works best if you have some VFX or video production knowledge.

Long-form video

In October 2025, Sora 2 raised its duration cap from 10 seconds to 15 seconds for all users and 25 seconds for Pro subscribers (£200/month).

The Storyboards feature, launched in December, lets you plan videos second by second, frame by frame. Pro users can build complete narrative arcs without constant jump-cutting.

In December 2025, Disney invested $1 billion in OpenAI as part of a deal allowing users to generate more than 200 Disney, Marvel, Pixar, and Star Wars characters on Sora 2.

The model still makes mistakes, and OpenAI continues to deal with copyright issues.

Overall winner

Kling wins on what matters for most creators: it's accessible and fast, and it handles complex human movement better than the alternatives.

Body tracking, facial consistency, keeping characters intact through action sequences: it's very good at most things. A proper all-rounder.

Sisinty expects 2026 to bring fewer breakthrough models and more optimisation and incremental improvement. For now, the tools work well enough for entertainment: virtual influencers, meme creators, YouTube Shorts. But integrating them into production workflows remains janky, with poor UX and high costs.