Prompt Engineering for Claude: A Practical Guide to Better AI Outputs

Anthropic's documentation on prompt engineering for Claude covers techniques from basic clarity to advanced methods like chain-of-thought reasoning, role prompting, and prompt chaining - with practical examples showing the impact of each approach.

Anthropic has a comprehensive set of documentation on how to get better results from Claude through prompt engineering. Their guidance covers everything from basic clarity to advanced techniques like chain-of-thought reasoning and prompt chaining.

The documentation assumes you've already defined success criteria and built empirical tests for your use case. Without those foundations, prompt engineering becomes guesswork. Once you have clear metrics, though, these techniques can dramatically improve Claude's performance - often faster and cheaper than alternatives like fine-tuning.

Start with clarity

Anthropic's first recommendation: treat Claude like a brilliant but completely new employee. The model has no context about your norms, style guidelines, or preferred ways of working. The more precisely you explain what you want, the better the output.

Their documentation includes a practical test: show your prompt to a colleague with minimal context on the task. If they're confused, Claude will be too.

Specific guidance includes giving Claude contextual information (what the results will be used for, who the audience is, where this task fits in a larger workflow), being explicit about what you want (if you want only code and nothing else, say so), and providing instructions as numbered lists or bullet points to ensure Claude follows your exact sequence.

The examples show the difference. For anonymising customer feedback, a vague prompt like "remove all personally identifiable information" produced inconsistent results - Claude left customer names in the output. A detailed prompt with six numbered steps specifying exactly how to handle names, emails, phone numbers, and product mentions produced clean, consistent anonymisation.

Use examples to guide behaviour

Anthropic recommends including 3-5 diverse, relevant examples in your prompts. This technique, called multishot prompting, works particularly well for tasks requiring structured outputs or adherence to specific formats.

The examples should mirror your actual use case, cover edge cases and potential challenges, and be wrapped in XML tags for structure. For multiple examples, nest them within an outer tag.

Their customer feedback analysis example demonstrates the impact. Without examples, Claude provided lengthy explanations and inconsistent categorisation - sometimes listing multiple categories, sometimes just one. With a single well-structured example showing the exact format (Category, Sentiment, Priority), Claude produced consistent, properly formatted output across all feedback items.

Let Claude think through complex problems

For tasks requiring research, analysis, or problem-solving, Anthropic recommends chain-of-thought prompting - giving Claude space to think step-by-step before providing an answer.

The documentation presents three approaches, ordered from least to most complex. The basic approach simply adds "Think step-by-step" to your prompt. The guided approach outlines specific steps for Claude to follow. The structured approach uses XML tags like <thinking> and <answer> to separate reasoning from the final answer.

Their financial advisor example shows the difference. Without step-by-step thinking, Claude recommended a bond investment with surface-level reasoning about certainty and risk tolerance. With structured thinking, Claude calculated exact figures for both scenarios, considered historical market volatility, analysed the client's specific risk tolerance given their house-buying goal, and provided a much more thorough justification for the recommendation.

The trade-off: increased output length may impact latency. Use chain-of-thought judiciously for tasks that genuinely require deep analysis.

Structure with XML tags

When prompts involve multiple components - context, instructions, examples - XML tags help Claude parse them accurately. Anthropic recommends tags like <instructions>, <example>, and <formatting> to clearly separate different parts.

The benefits: clarity in separating prompt components, reduced errors from Claude misinterpreting sections, flexibility to modify parts without rewriting everything, and easier extraction of specific parts from Claude's output.

Their legal contract analysis example shows the impact. Without XML tags, Claude provided a superficial analysis and generated a report that didn't match the required structure. With tags wrapping the contract, instructions, and formatting example, Claude produced a properly structured report with the correct tone, identified all critical issues with specific risk assessments, and provided actionable recommendations with proposed contract language.

Best practices: use consistent tag names throughout your prompts, nest tags for hierarchical content, and combine XML tags with other techniques like multishot prompting or chain-of-thought.

Give Claude a role

Using the system parameter to give Claude a role can dramatically improve performance. This technique, called role prompting, works best with specific, detailed role descriptions.

The documentation's legal contract example demonstrates the impact. Without a role, Claude provided a superficial analysis: "The indemnification and liability clauses are typical, and we maintain our IP rights." With the role of General Counsel at a Fortune 500 tech company, Claude identified critical issues that could cost millions - overly broad indemnification that could make the company liable for the vendor's negligence, a liability cap of $500 that's "grossly inadequate" for a data infrastructure failure, and IP ownership terms that would give the vendor access to proprietary algorithms.

Anthropic recommends using the system parameter for the role, then putting task-specific instructions in the user turn. Experiment with different role formulations - a "data scientist" might see different insights than a "data scientist specialising in customer insight analysis for Fortune 500 companies."

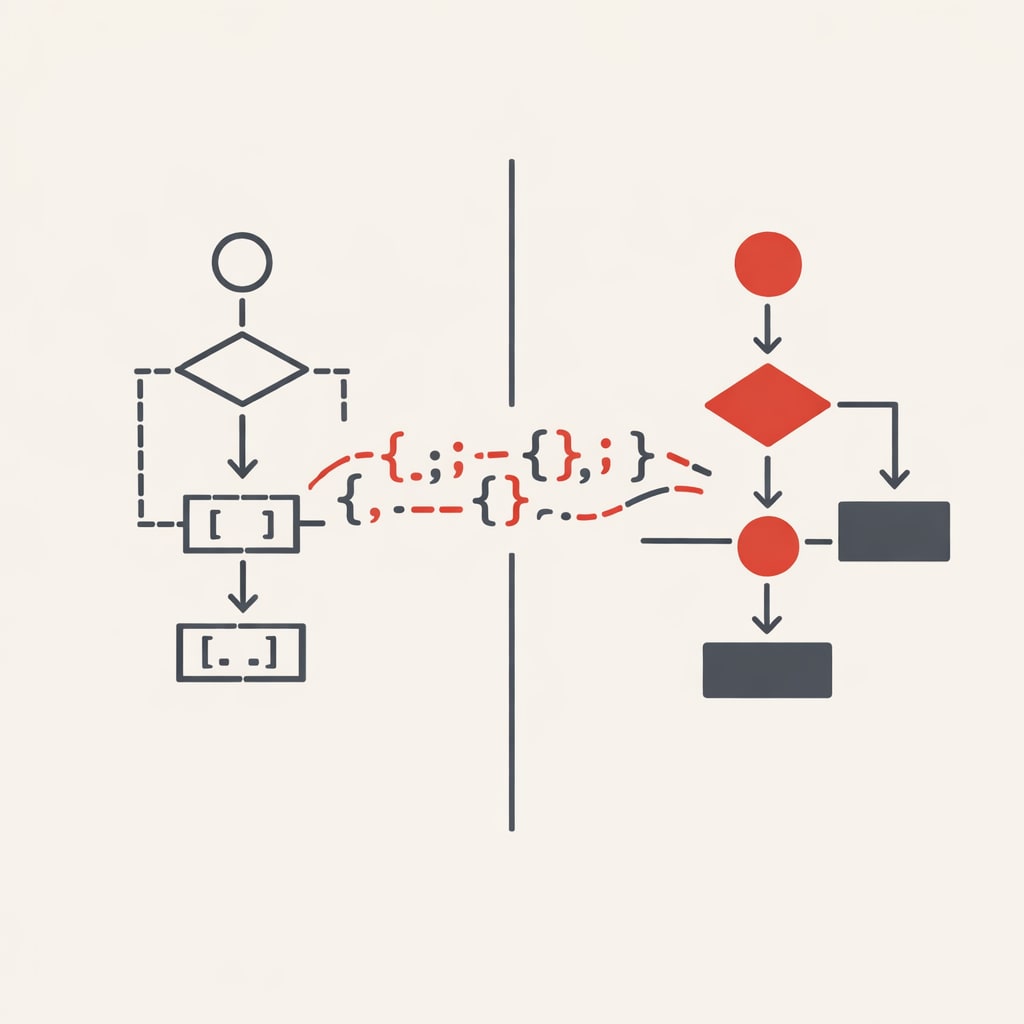

Chain prompts for complex tasks

When tasks have multiple distinct steps requiring in-depth thought, Anthropic recommends breaking them into smaller subtasks - each getting Claude's full attention.

The benefits: improved accuracy because each subtask gets focused attention, clearer outputs because simpler subtasks mean clearer instructions, and easier debugging because you can pinpoint and fix specific steps without redoing everything.

Their legal contract analysis example shows the difference. A single prompt asking Claude to review a SaaS contract and draft an email missed critical details - the email lacked proposed changes and the analysis was superficial. Breaking it into three prompts (analyse risks, draft email based on analysis, review email for tone and professionalism) produced comprehensive risk analysis with impact assessment, a detailed email with specific proposed changes for each issue, and professional review ensuring the right balance of assertiveness and collaboration.

The documentation recommends using XML tags to pass outputs between prompts, ensuring each subtask has a single clear objective, and refining based on performance. For tasks with independent subtasks, run prompts in parallel for speed.

Advanced users can create self-correction chains where Claude reviews its own work. Their medical research summary example shows a three-prompt chain: summarise the paper, review the summary for accuracy and completeness, then improve the summary based on feedback. This approach catches errors and refines outputs for high-stakes tasks.

Extended thinking for difficult problems

Anthropic's extended thinking feature allows Claude to work through complex problems step-by-step before responding. The documentation recommends starting with general instructions rather than step-by-step prescriptive guidance - Claude's creativity in approaching problems often exceeds human ability to prescribe the optimal process.

Instead of detailed steps, try: "Please think about this problem thoroughly and in great detail. Consider multiple approaches and show your complete reasoning. Try different methods if your first approach doesn't work."

Extended thinking works well with multishot prompting. You can include examples using XML tags like <thinking> to show canonical patterns, though the documentation notes Claude may perform better with free rein to think as it deems best.

The documentation recommends starting with a small thinking budget (minimum 1024 tokens) and increasing as needed. For workloads requiring more than 32K tokens of thinking, use batch processing to avoid networking issues and timeouts.

Supporting tools

Anthropic provides several tools to support prompt engineering. The prompt generator helps solve the "blank page problem" by creating initial templates following best practices. The prompt improver takes existing templates and enhances them through automated analysis, adding detailed chain-of-thought instructions and standardized formatting.

Both tools work with prompt templates and variables - separating fixed content (static instructions that remain constant) from variable content (dynamic elements like user inputs or retrieved data). This separation supports consistency, efficiency, testability, scalability, and version control.

The Console's evaluation tool allows you to test, scale, and track versions of your prompts by keeping the variable and fixed portions separate. This makes it easier to identify what's working and what needs refinement.

Practical implementation

The documentation emphasizes that not every task requires every technique. For simple factual queries, just answer directly - no tools needed. For constraint optimisation problems or tasks requiring structured thinking frameworks, extended thinking with detailed instructions produces better results. For research synthesis or document analysis, prompt chaining prevents Claude from dropping steps.

The guidance throughout focuses on empirical testing. Start with clear success criteria, build tests, try techniques in order from most broadly effective to most specialised, and measure results. Prompt engineering works best as an iterative process grounded in actual performance data rather than assumptions about what should work.