A Weekly Ritual for Building AI Product Sense

Dr. Marily Nika shares a 15-minute weekly practice for building AI product sense: three deliberate tests that reveal how models behave, where they fail, and how to design products that work despite those limitations.

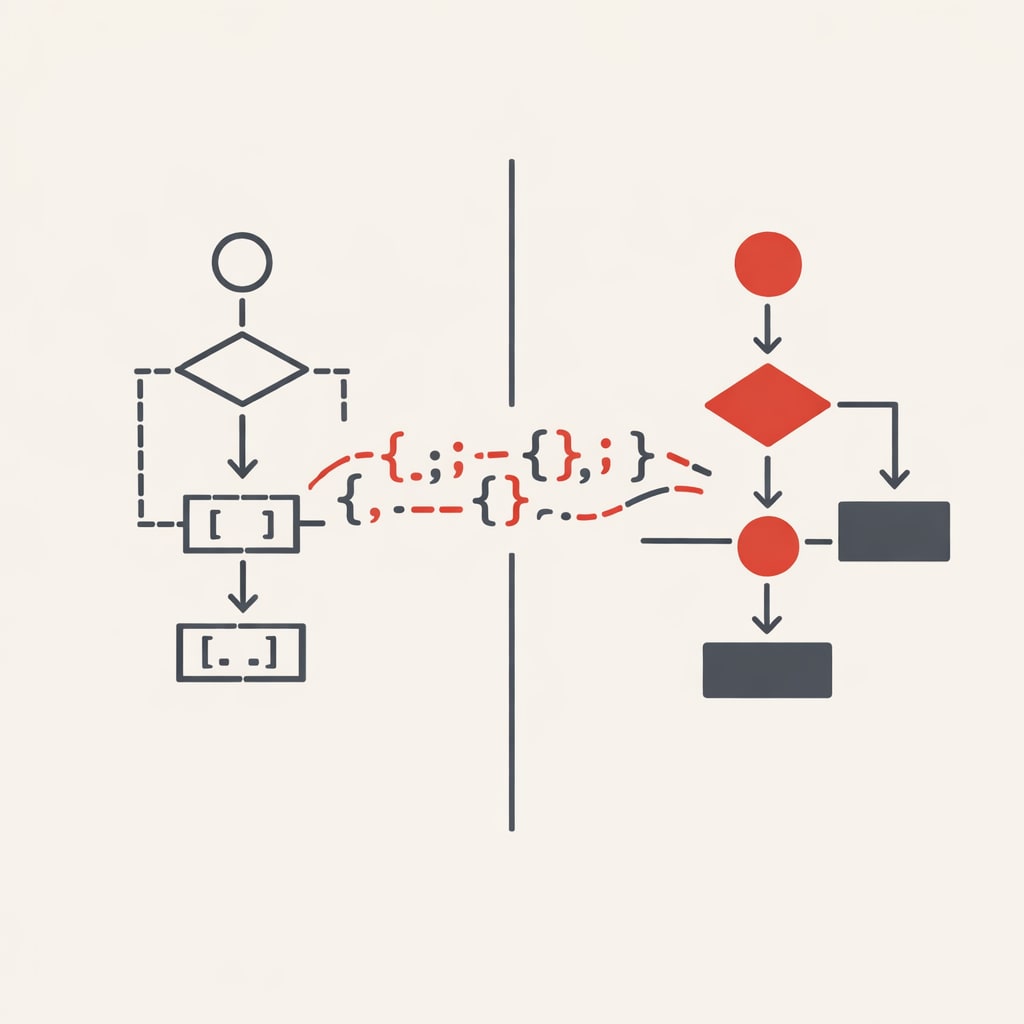

Dr. Marily Nika, who spent a decade as an AI PM at Google and Meta, has developed a simple weekly practice for building what she calls "AI product sense": the ability to understand what AI models can do, where they fail, and how to design products that work despite those limitations. It consists of three deliberate tests that force you to see how a model behaves before your users do.

Ask the model to do something obviously wrong

Take a messy Slack thread, the kind full of half-thoughts, jokes, and tangents, and ask the model to extract "strategic product decisions" from it. Generative models have a dangerous pattern: when confronted with chaos, they confidently invent structure.

Nika gives an example thread where people are discussing bugs, dark mode, and random UI concerns. When she asked the model to extract strategic decisions, it hallucinated a roadmap, assigned owners, and turned offhand comments into commitments.

The fix is to re-run the same thread with one additional instruction: "Only include items explicitly mentioned. If something is missing, say 'Not enough information.'" The model then acknowledges uncertainty, asks clarifying questions, and surfaces themes without inventing facts.
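The two runs can be set up as a small harness so the only difference between them is the guardrail sentence. This is a minimal sketch, not Nika's tooling: `call_model` is a hypothetical stand-in for whatever LLM client you use, and the task wording is an assumption. Only the guardrail text is quoted from the article.

```python
from typing import Callable

# Assumed task wording; adjust to match what you actually ask the model.
TASK = "Extract the strategic product decisions from this Slack thread."

# Guardrail instruction quoted from the article.
GUARDRAIL = (
    "Only include items explicitly mentioned. "
    "If something is missing, say 'Not enough information.'"
)


def build_prompts(thread: str) -> dict[str, str]:
    """Return the unguarded and guarded prompts for the same thread."""
    baseline = f"{TASK}\n\nThread:\n{thread}"
    guarded = f"{TASK}\n{GUARDRAIL}\n\nThread:\n{thread}"
    return {"baseline": baseline, "guarded": guarded}


def run_comparison(thread: str, call_model: Callable[[str], str]) -> dict[str, str]:
    """Send both prompts through the supplied model function and collect the outputs."""
    return {name: call_model(prompt) for name, prompt in build_prompts(thread).items()}
```

Keeping everything else identical makes it easier to attribute any change in the output to the guardrail rather than to incidental prompt differences.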

Comparing these two outputs, confident hallucination versus humble clarity, teaches you what the model needs to behave reliably. You're looking for what changed, what guardrail fixed the problem, and whether the "good" version feels shippable.

Ask the model to do something ambiguous

Ambiguity breaks probabilistic systems because models fill gaps with their best guess. Nika suggests uploading a PRD to NotebookLM and asking it to "summarize this for the VP of Product."

Does it over-summarise? Latch onto irrelevant details? Assume the wrong audience? These failures reveal the model's semantic fragility - where it technically understands your words but completely misses your intent.

The product should step in where the model gets confused. That might mean asking the user to choose a goal, giving the model more context, or constraining the action so it can't go off-track.
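One way to picture the product stepping in is a thin layer that refuses to call the model until the user has chosen a goal. The sketch below is illustrative only: the goal names, their instructions, and the return shape are all assumptions, not part of NotebookLM or any real API.

```python
from typing import Optional

# Hypothetical goal options; a real product would define its own.
GOALS = {
    "decide": "State the decision needed and the options, in under 150 words.",
    "status": "Report progress, blockers, and next milestones.",
    "risks": "List the main risks and open questions.",
}


def summary_request(document: str, audience: str, goal: Optional[str] = None) -> dict:
    """Return a clarifying question when the goal is missing, else a constrained prompt."""
    if goal not in GOALS:
        # Ambiguous request: ask the user instead of letting the model guess.
        return {
            "action": "ask_user",
            "question": f"What should this summary help the {audience} do?",
            "options": sorted(GOALS),
        }
    prompt = (
        f"Summarise the document below for the {audience}.\n"
        f"Goal: {GOALS[goal]}\n"
        "Use only information from the document.\n\n"
        f"{document}"
    )
    return {"action": "call_model", "prompt": prompt}
```

Calling `summary_request(prd_text, "VP of Product")` with no goal yields a question for the user, while passing `goal="risks"` yields a prompt with the audience, goal, and source constraint spelled out.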

Ask the model to do something unexpectedly difficult

Pick a task that feels simple to a human PM but stresses the model's reasoning or judgement. Nika suggests: "Group these 40 bugs into themes and propose a roadmap" or "Summarise this PRD and flag risks for leadership."

You're trying to see where it breaks first. That's exactly where you need to design guardrails, narrow inputs, or split the task into smaller steps.

Define minimum viable quality

Performance almost always drops when AI features leave the controlled development environment. Nika recommends defining three thresholds:

- Acceptable bar: Good enough for real users

- Delight bar: Where the feature feels magical

- Do-not-ship bar: Unacceptable failure rates that break trust

From her work on speech recognition and speaker identification, Nika learned that models can hit 90% accuracy in controlled tests and fall apart in a real home with a barking dog or running dishwasher.

For speaker identification, the delight bar isn't a perfect percentage; it's behavioural signals, such as users no longer having to repeat themselves or "No, I meant..." corrections dropping sharply. If eight or nine out of ten attempts work without a retry, it feels magical. If one in five needs a retry, trust erodes.
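The three bars can be checked against a log of real attempts. This is a rough sketch under stated assumptions: each attempt is recorded as `True` when it worked without a retry, and the default cut-offs (nine in ten for delight, one in five retries as the do-not-ship line) are one illustrative reading of the figures above, to be tuned per product.

```python
def retry_free_rate(attempts: list[bool]) -> float:
    """Share of attempts that worked without a retry (True = no retry needed)."""
    if not attempts:
        raise ValueError("need at least one attempt")
    return sum(attempts) / len(attempts)


def quality_bar(rate: float, delight: float = 0.9, do_not_ship: float = 0.8) -> str:
    """Classify a retry-free rate; the default bars are illustrative assumptions."""
    if rate >= delight:
        return "delight"
    if rate > do_not_ship:
        return "acceptable"
    return "do-not-ship"
```

Feeding it logs from real environments, rather than controlled tests, is the point: the same model can land in different bars depending on where the attempts were recorded.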

Estimate the cost envelope

One common mistake is falling in love with a magical demo without checking if it's financially viable. Nika recommends estimating the cost envelope early - the rough range of what a feature will cost to run at scale.

For AI meeting notes, she works through the numbers: at roughly £0.02 per 30-minute transcript, a user with 20 meetings a month costs about £0.40 per month. A heavy user with 100 meetings might cost £2.00. With caching and a smaller model for low-stakes meetings, the typical figure might drop to £0.25-£0.30 per user.

A feature costing £0.30 per user monthly that drives retention is straightforward. A feature at £5 per user with unclear impact is a business problem.
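The meeting-notes arithmetic is simple enough to keep as a small function and re-run whenever the assumptions change. The per-transcript cost and meeting counts below are the article's rough figures; the function names are made up for illustration, and the sketch does not model the savings from caching or a smaller model.

```python
COST_PER_TRANSCRIPT_GBP = 0.02  # rough figure from the article, per 30-minute meeting


def monthly_cost_per_user(
    meetings: int, cost_per_transcript: float = COST_PER_TRANSCRIPT_GBP
) -> float:
    """Estimated monthly cost in pounds for one user, rounded to the nearest penny."""
    return round(meetings * cost_per_transcript, 2)


def cost_envelope(typical_meetings: int = 20, heavy_meetings: int = 100) -> tuple[float, float]:
    """Return the (typical, heavy) monthly cost per user in pounds."""
    return (
        monthly_cost_per_user(typical_meetings),
        monthly_cost_per_user(heavy_meetings),
    )


low, high = cost_envelope()
print(f"£{low:.2f} to £{high:.2f} per user per month")  # £0.40 to £2.00 per user per month
```

Changing the per-transcript cost or the meeting counts shows quickly how sensitive the envelope is to each assumption.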

Design guardrails where behaviour breaks

Guardrails determine what the product should do when the model hits its limits. At a startup Nika worked with, they built a feature to summarise Slack threads into decisions and action items. It worked well until it started assigning owners when no one had agreed to anything.

The fix was a single rule in the system prompt: "Only assign an owner if someone explicitly volunteers or is directly asked and confirms. Otherwise, surface themes and ask the user what to do next."
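A prompt rule like this can be paired with a deterministic check on the model's output. The sketch below is an assumption layered on top of the article, not what the startup built: it supposes the model returns each action item with the quote it relied on (`evidence`), and it clears any owner whose quote does not appear verbatim in the thread. The `ActionItem` shape and `enforce_owner_rule` name are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Rule quoted from the article; everything else in this block is an assumption.
OWNER_RULE = (
    "Only assign an owner if someone explicitly volunteers or is directly "
    "asked and confirms. Otherwise, surface themes and ask the user what to do next."
)


@dataclass
class ActionItem:
    task: str
    owner: Optional[str] = None
    evidence: Optional[str] = None  # quote the model cites as the owner's confirmation


def enforce_owner_rule(items: list[ActionItem], thread: str) -> list[ActionItem]:
    """Clear any owner whose cited evidence is not found verbatim in the thread."""
    checked = []
    for item in items:
        confirmed = bool(item.owner and item.evidence and item.evidence in thread)
        checked.append(
            ActionItem(
                task=item.task,
                owner=item.owner if confirmed else None,
                evidence=item.evidence if confirmed else None,
            )
        )
    return checked
```

A verbatim match is a crude test (it does not confirm who said the quote), but it catches the case described above, where an owner appears without anyone having agreed to anything.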

That one constraint eliminated the biggest trust issue almost immediately.