Code That Reviews Itself Before You See It

Kieran Klaassen opened GitHub to review code and found Claude Code had already applied three months of feedback. This is compounding engineering - building systems where each iteration teaches the next one.

In a recent blog post, Kieran Klaassen, an engineer at Every, described opening GitHub expecting his usual code review routine. Instead, he found that Claude Code, the AI tool that writes and edits code in his terminal, had already applied feedback from three months of prior reviews. It had learned his preferences and fixed issues before he could flag them.

The AI's comments referenced specific pull requests: "Changed variable naming to match pattern from PR #234, removed excessive test coverage per feedback on PR #219, added error handling similar to approved approach in PR #241."

This is what Klaassen calls "compounding engineering" - building development systems where each iteration teaches the next one. Every pull request becomes a lesson. Every bug fix prevents its entire category of bug from recurring. The system doesn't just help today; it gets faster tomorrow.

Teaching the system to teach itself

Compounding engineering requires upfront investment. You teach your tools before they can teach themselves.

When building a frustration detector for Cora, Every's AI email assistant, Klaassen started with a sample conversation showing user frustration. He gave Claude a simple prompt: "This conversation shows frustration. Write a test that checks if our tool catches it."

Claude wrote the test. It failed - the natural first step in test-driven development. Then Claude wrote the detection logic. Still imperfect. But here's where it gets interesting: Klaassen had Claude iterate on the frustration detection prompt until the test passed.

Claude adjusted the prompt, ran the test, read the logs, and adjusted again. After several rounds, the test passed. But AI outputs aren't deterministic, so Klaassen had Claude run the test 10 times. When it identified frustration in only four of the 10 runs, Claude analysed the failures and discovered it was missing hedged language like "Hmm, not quite" that signals frustration when paired with repeated requests.

Claude updated the prompt to look for this pattern. Next iteration: nine of the 10 runs passed. Good enough to ship.
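The repeat-and-measure step can be sketched in a few lines of Python. This is a minimal illustration, not Klaassen's actual harness: `pass_rate`, `meets_bar`, and the 0.9 threshold are assumptions made for the example (the threshold simply mirrors the nine-in-10 result above), and the scripted outcomes stand in for real calls to a model.

```python
from typing import Callable


def pass_rate(check: Callable[[], bool], runs: int = 10) -> float:
    """Run a non-deterministic check `runs` times; return the fraction that passed."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    passes = sum(1 for _ in range(runs) if check())
    return passes / runs


def meets_bar(rate: float, threshold: float = 0.9) -> bool:
    """Hypothetical shipping bar: the assumed threshold is 9 passes in 10."""
    return rate >= threshold


if __name__ == "__main__":
    # Scripted outcomes stand in for ten real runs of an LLM-backed test.
    scripted = iter([True, False, True, False, False, True, False, True, False, False])
    rate = pass_rate(lambda: next(scripted), runs=10)
    print(f"pass rate: {rate:.0%}, ship: {meets_bar(rate)}")
```

Measuring a rate rather than a single pass or fail is what exposes a prompt that only works some of the time.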

The entire workflow - from identifying patterns to iterating prompts to validation - went into CLAUDE.md, the file Claude pulls for context. Next time they need to detect user emotion, they don't start from scratch. The system already knows what to do.

Permanent fixes from temporary failures

At Cora, this approach has transformed how the team works. Production errors now trigger AI agents that investigate crashes, reproduce problems from logs, and generate both the solution and tests to prevent recurrence. Every failure becomes a one-time event.

Design discussions get recorded and documented by Claude, capturing why certain approaches were chosen. New team members inherit these standards on day one.

Review agents with different expertise - a "Rails expert reviewer" for framework best practices, a "performance reviewer" for speed optimization - work in parallel. What used to be a back-and-forth process taking hours now happens simultaneously.
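As a rough sketch of the fan-out, assuming each reviewer can be treated as a function that takes a diff and returns comments: the two reviewer functions below are hypothetical stand-ins with toy string checks, not the agents Every runs, and `review_in_parallel` is a name invented for this example.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

Reviewer = Callable[[str], List[str]]


def rails_reviewer(diff: str) -> List[str]:
    """Hypothetical stand-in for a 'Rails expert reviewer' agent."""
    if "update_all" in diff:
        return ["update_all skips validations and callbacks"]
    return []


def performance_reviewer(diff: str) -> List[str]:
    """Hypothetical stand-in for a 'performance reviewer' agent."""
    if ".each" in diff and ".where" in diff:
        return ["possible query inside a loop"]
    return []


def review_in_parallel(diff: str, reviewers: Dict[str, Reviewer]) -> Dict[str, List[str]]:
    """Run every reviewer on the same diff concurrently; collect comments by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(reviewers))) as pool:
        futures = {name: pool.submit(fn, diff) for name, fn in reviewers.items()}
        return {name: future.result() for name, future in futures.items()}


if __name__ == "__main__":
    sample = "users.each { |u| Order.where(user: u).update_all(seen: true) }"
    reviewers = {"rails": rails_reviewer, "performance": performance_reviewer}
    for name, comments in review_in_parallel(sample, reviewers).items():
        print(name, comments)
```

Because the reviewers do not depend on each other's output, total wall-clock time is roughly that of the slowest reviewer rather than the sum of all of them.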

The results: features that took over a week now ship in 1-3 days. Pull request reviews that dragged on for days finish in hours. Bugs caught before production have increased substantially.

The five-step approach

Klaassen's workflow comes down to five steps:

Teach through work. Every decision gets captured in CLAUDE.md - why you prefer guard clauses over nested ifs, how you name things. The llms.txt file stores architectural decisions that don't change when you restructure features. These files turn preferences into permanent system knowledge.

Turn failures into upgrades. When something breaks, add the test, update the rule, write the evaluation. A user reported they never received their daily email Brief. The team wrote tests to catch similar delivery lapses, updated monitoring rules, and built evaluations that continuously verify the pipeline. The system now watches for this category of problem permanently.

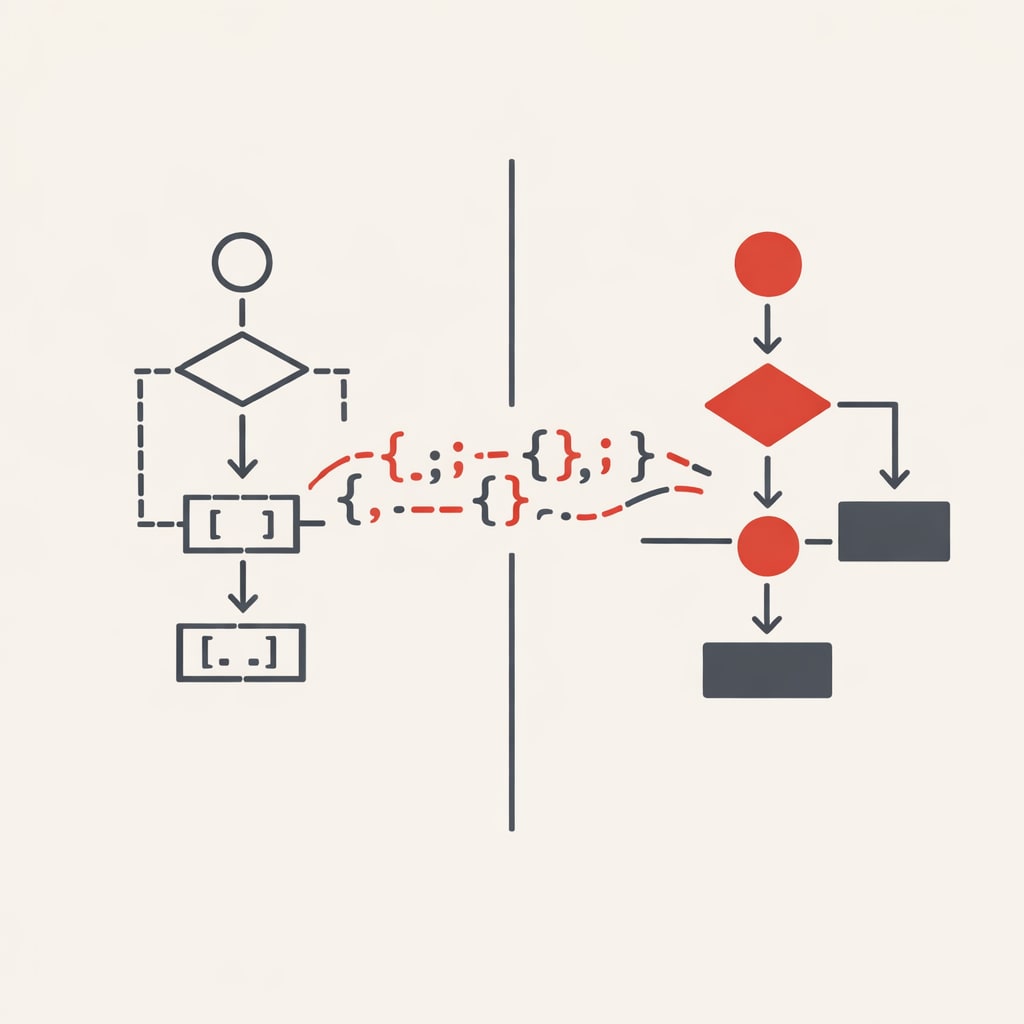

Orchestrate in parallel. Klaassen's monitor looks like mission control. Left lane: a Claude instance reads issues and writes implementation plans. Middle lane: another Claude takes those plans and writes code. Right lane: a third Claude reviews against CLAUDE.md and catches issues. Unlike hiring engineers at £120,000 each, AI workers scale on demand.

Keep context lean but yours. Don't copy generic CLAUDE.md files from the internet. Your context should reflect your codebase and your patterns. Ten specific rules you follow beat 100 generic ones. When rules stop serving you, delete them.

Trust the process, verify output. Don't micromanage every line. Trust the system you've built, but verify through tests and spot checks. When something comes back wrong, teach the system why. Next time, it won't be.
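To make the first step concrete, here is the kind of preference a rule like "prefer guard clauses over nested ifs" describes. The example is illustrative only: the function names, the `user` dictionary, and its keys are hypothetical and not taken from Cora's codebase.

```python
from typing import Optional


def send_brief_nested(user: Optional[dict]) -> str:
    """Nested version: the happy path is buried three levels deep."""
    if user is not None:
        if user.get("subscribed"):
            if user.get("email"):
                return f"sent to {user['email']}"
            else:
                return "skipped: no email"
        else:
            return "skipped: unsubscribed"
    else:
        return "skipped: no user"


def send_brief_guarded(user: Optional[dict]) -> str:
    """Guard-clause version: reject bad input early, keep the happy path flat."""
    if user is None:
        return "skipped: no user"
    if not user.get("subscribed"):
        return "skipped: unsubscribed"
    if not user.get("email"):
        return "skipped: no email"
    return f"sent to {user['email']}"
```

Both functions behave identically; the difference is purely one of readability, which is exactly the sort of preference that is easy to forget to state and cheap to write down once.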

Klaassen's approach shifts what it means to be an engineer. The job isn't typing code anymore - it's designing the systems that design the systems. It's an approach where today's work makes tomorrow's work easier, and where each improvement stays in the system instead of being relearned.