The problem with “transparent” image generation

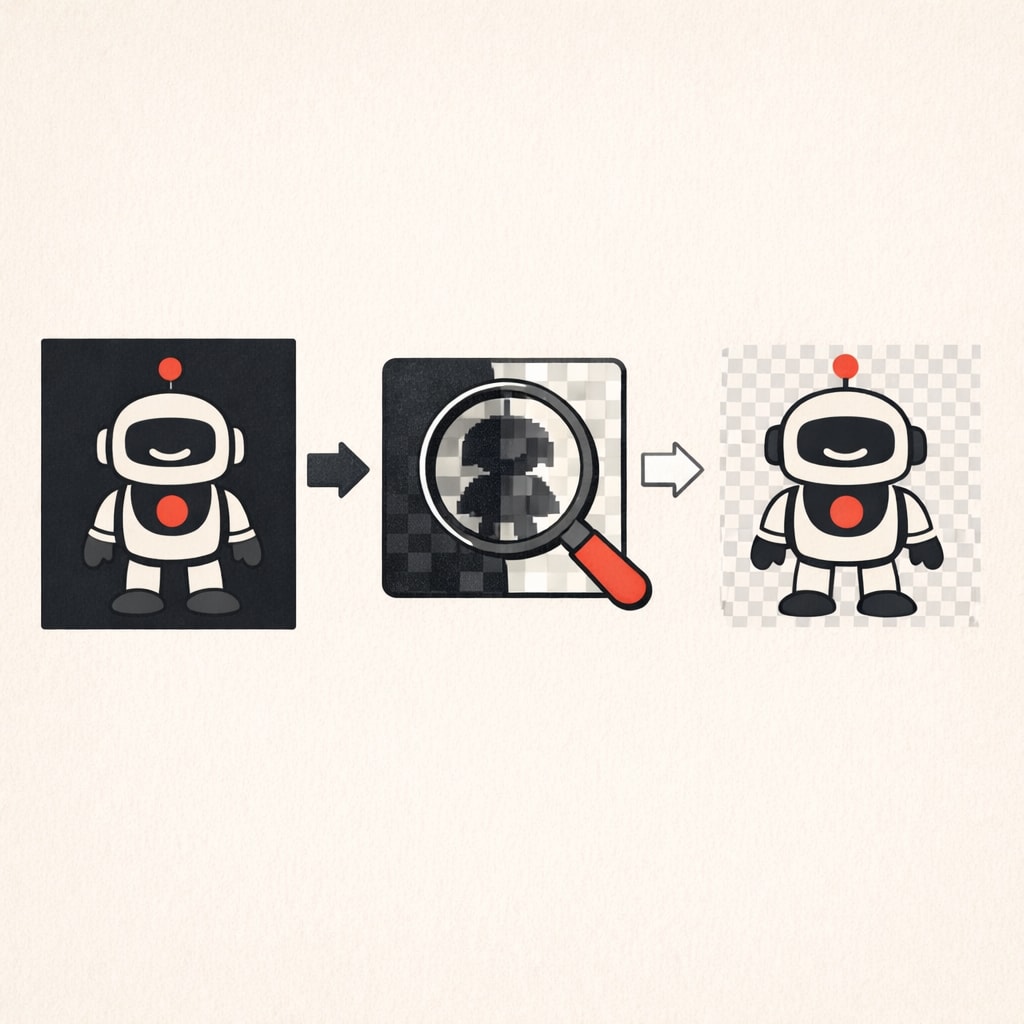

Julien De Luca's method for generating transparent backgrounds uses difference matting between black and white background versions. Two passes, clean results, no manual masking required.

Julien De Luca wrote about generating transparent background images from models that don't natively support alpha channels. His method uses difference matting with Nano Banana Pro 2, but the technique works with any image generation model.

The problem is straightforward: you want a subject with a transparent background, but the model only outputs RGB images. Traditional approaches like green screen keying fail because AI-generated images often have colour spill and inconsistent lighting that make clean extraction impossible.

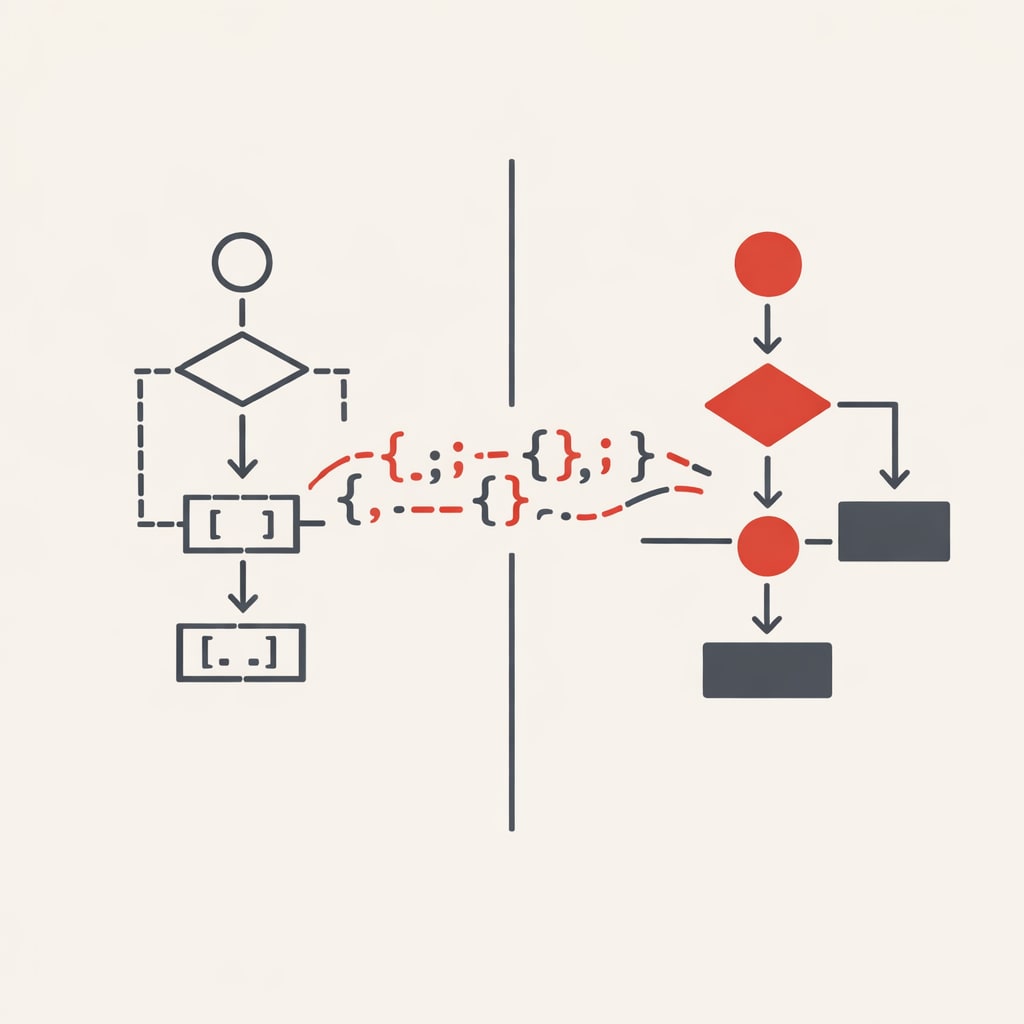

De Luca's solution generates two images from prompts that are identical except for the background. The first uses a pure black background (#000000), the second uses pure white (#FFFFFF). By comparing the pixel values between these two images, you can calculate which parts are the subject and which parts are background.

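The maths is simple. For each pixel, you measure how much it changes between the black and white versions. Pixels that don't change at all are fully opaque subject. Pixels that swing all the way from black to white are pure background. Anything in between is partially transparent, such as soft edges or glass. The alpha channel is this difference, inverted.

The formula looks like this: for each colour channel, you calculate (white_pixel - black_pixel) / 255. This gives you the transparency of the pixel, so alpha (the opacity stored in the image) is 1 minus that value. Then you recover the subject colour by dividing the black background pixel by alpha, because compositing over black scales the subject colour by alpha and adds nothing else.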
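To make the arithmetic concrete, here is a minimal single-pixel sketch in plain Python. It is not De Luca's code: the function name `matte_pixel` is hypothetical, and averaging the three channel differences into one transparency value is an assumption made for illustration. The variables are named `transparency` and `opacity` to keep the two quantities apart; `opacity` is what ends up in the alpha channel.

```python
def matte_pixel(black_px, white_px):
    """Recover (r, g, b, a) for one pixel from its two renders.

    black_px, white_px: (r, g, b) tuples of ints in 0..255, taken from the
    black-background and white-background images at the same position.
    """
    # Average the per-channel differences; in an ideal pair all three agree.
    diff = sum(w - b for b, w in zip(black_px, white_px)) / 3
    transparency = diff / 255                        # 0 = subject, 1 = background
    opacity = min(1.0, max(0.0, 1 - transparency))   # clamp against noisy input

    if opacity <= 0:
        # Nothing of the subject is visible here, so its colour is undefined.
        return (0, 0, 0, 0)

    # On black, observed = opacity * subject, so dividing undoes the darkening.
    rgb = tuple(min(255, round(c / opacity)) for c in black_px)
    return (*rgb, round(opacity * 255))
```

A pixel that is identical in both renders, such as `matte_pixel((200, 30, 30), (200, 30, 30))`, comes back fully opaque as `(200, 30, 30, 255)`. A pixel that goes from pure black to pure white, `matte_pixel((0, 0, 0), (255, 255, 255))`, comes back as `(0, 0, 0, 0)`.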

In practice, you need to handle edge cases. Fully transparent background pixels make the divisor zero, and nearly transparent ones amplify any noise in the black version. De Luca adds small epsilon values to prevent this. You also need to clamp the final RGB values to the valid 0 to 255 range.
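The epsilon guard and the clamping can be sketched over whole images with NumPy. This is an illustrative version under stated assumptions, not De Luca's implementation: the function name `difference_matte`, the default `eps=1e-6`, and the choice to zero out the colour of fully transparent pixels are all assumptions.

```python
import numpy as np

def difference_matte(black, white, eps=1e-6):
    """Combine two HxWx3 uint8 renders into one HxWx4 uint8 RGBA image."""
    b = black.astype(np.float64) / 255.0
    w = white.astype(np.float64) / 255.0

    # Opacity is 1 minus the mean channel difference, clamped to [0, 1].
    alpha = np.clip(1.0 - (w - b).mean(axis=-1, keepdims=True), 0.0, 1.0)

    # eps keeps the divisor non-zero where alpha is 0 (pure background).
    # Clamping then pulls any overshoot back into the valid range.
    rgb = np.clip(b / np.maximum(alpha, eps), 0.0, 1.0)

    # The colour of a fully transparent pixel is undefined, so store black.
    rgb = np.where(alpha > eps, rgb, 0.0)

    rgba = np.concatenate([rgb, alpha], axis=-1)
    return np.round(rgba * 255.0).astype(np.uint8)
```

Both inputs must have the same height and width. The result can be handed to any image library that accepts an RGBA array for saving as PNG.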

The technique requires two generation passes, which doubles the cost and time. But it produces clean transparency without manual masking or retouching. The quality depends on the model rendering the same subject in the same position in both passes, which modern models handle well with fixed seeds. Any shift between the two images shows up as fringing or holes in the alpha channel.
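Because everything depends on the two passes matching, it can help to check a pair before matting it. The sketch below is an assumption-laden diagnostic, not part of De Luca's method: the function name `pair_consistency` and the `tolerance=8` threshold are hypothetical. It relies on two properties of a well-matched pair: a pixel over white is never darker than the same pixel over black, and a single alpha value implies the same difference in all three channels.

```python
import numpy as np

def pair_consistency(black, white, tolerance=8):
    """Report how well two HxWx3 uint8 renders fit the matting assumptions."""
    # int16 holds the full -255..255 range of differences without wrapping.
    diff = white.astype(np.int16) - black.astype(np.int16)

    # Over white, a pixel should never be darker than over black.
    negative = (diff < -tolerance).any(axis=-1)

    # One alpha per pixel implies equal differences in R, G and B.
    spread = diff.max(axis=-1) - diff.min(axis=-1)
    uneven = spread > tolerance

    return {
        "negative_fraction": float(negative.mean()),
        "uneven_fraction": float(uneven.mean()),
    }
```

Values near zero suggest the subject lines up in both renders. Large fractions suggest the subject moved or changed between passes, in which case regenerating the pair is likely to work better than trying to repair the matte.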

De Luca provides Python code that implements the full pipeline. It handles the image generation, difference calculation, and final composite. The code is compact enough to adapt for other models or integrate into existing workflows.