Thread-Based Engineering: A Framework for Measuring AI-Assisted Development

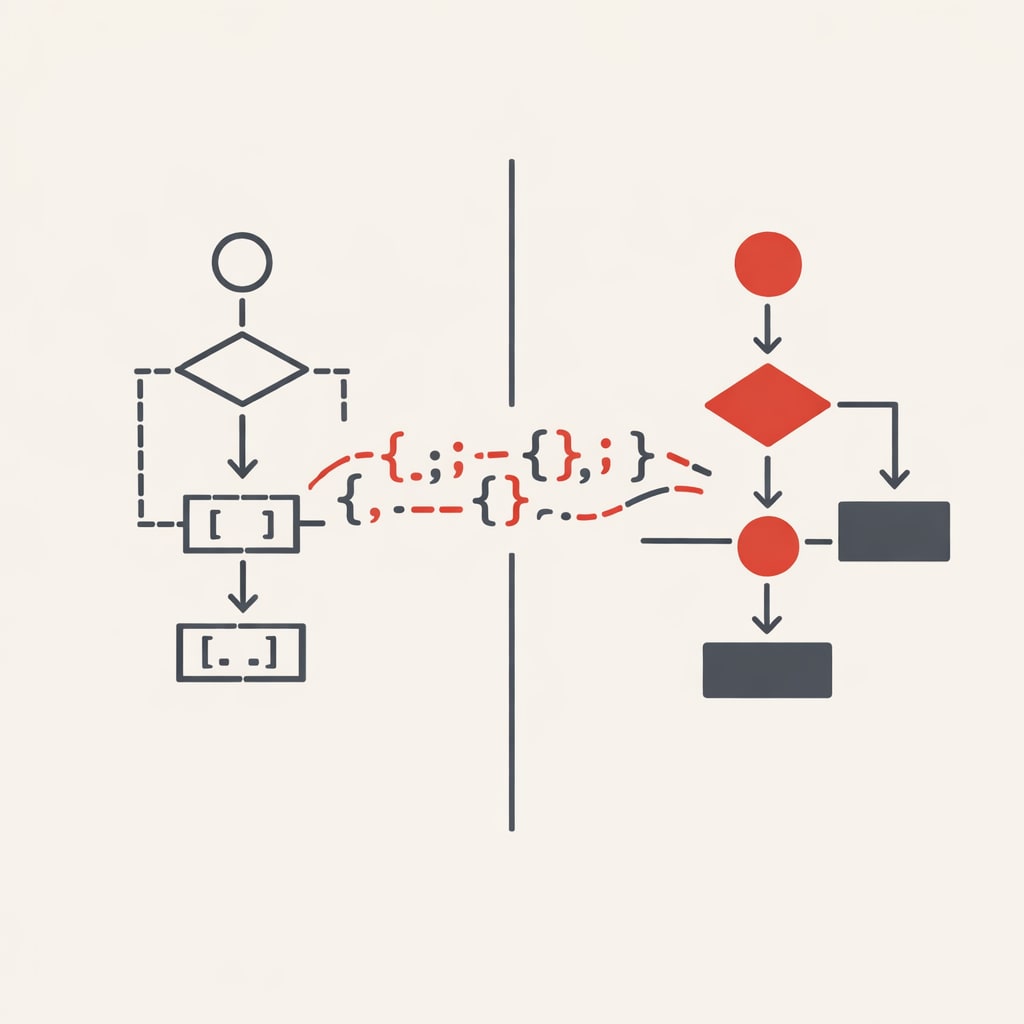


Miguel Miranda Dias wrote about a mental framework for understanding and improving how engineers work with AI coding agents. He calls it "Thread-Based Engineering", a way to visualise and measure productivity gains that often feel vague or unmeasurable.

The framework came from a simple question: how do you know you're actually getting better at AI-assisted coding, rather than just feeling busier? Even Andrej Karpathy recently admitted feeling behind, sensing he could be "10x more powerful" if he properly used what's available. If someone of his calibre feels the pressure, the rest of us need a clear model.

A thread is a unit of engineering work. It has three parts: your prompt, the agent's execution through tool calls, and your review of the output. Previously, you made every API call and wrote every line. Now the agent handles the middle work. Your role shifts to orchestration and validation.

The six thread patterns

Dias identifies six thread types, from basic to advanced:

Base Thread: One prompt, one agent session, one review. Everyone starts here. The fundamental unit.

He recommends creating an alias to launch agents faster:

alias cldyo="claude --dangerously-skip-permissions --model opus"

P-Thread (Parallel): Multiple threads running simultaneously with the same prompt. Boris Cherny, creator of Claude Code, runs five Claude instances in parallel, numbering his terminal tabs 1-5. This works well for code reviews, test generation, or exploring different implementation approaches.
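As a rough illustration of the P-Thread shape, the sketch below launches several agent runs in the background with the same prompt and waits for all of them before review. `run_agent` is a hypothetical stub standing in for a real agent CLI call. `run_parallel`, the output directory, and the file names are assumptions made for this example, not part of Dias's framework.

```shell
# Hypothetical stub: stands in for a real agent CLI invocation.
# Replace the body with your own command.
run_agent() {
  echo "agent $1 result for: $2"
}

# Launch COUNT agents with the same PROMPT, writing one output file per agent.
run_parallel() {
  local count="$1" prompt="$2" out_dir="$3" i=1
  mkdir -p "$out_dir"
  while [ "$i" -le "$count" ]; do
    run_agent "$i" "$prompt" > "$out_dir/agent-$i.txt" &
    i=$((i + 1))
  done
  wait  # block until every background agent has finished
}
```

Calling `run_parallel 5 "review the auth module" temp/reviews` would leave five files to compare, roughly mirroring the five-tab setup described above.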

You can use the fork-terminal skill to spawn parallel agents with one command.

fork terminal: cli, review code in src/api/* and write findings to temp/auth-review.md

C-Thread (Chained): Work broken into distinct phases with human checkpoints between each.

Phase 1 → Review → Phase 2 → Review → Phase 3 → Review

Use this when work doesn't fit in one context window, when failure risk is high, or when doing something irreversible like database migrations. Effective but expensive in human energy.
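The phase-and-checkpoint flow of a C-Thread could be sketched along these lines. `run_phase` is a hypothetical stub for an agent run, and `review_phase` and `run_chain` are names invented for this example. The checkpoint here simply reads `y` or anything else from standard input, which is one assumption about how a human approval step might look.

```shell
# Hypothetical stub: stands in for a real agent run of one phase.
run_phase() {
  echo "agent output for phase: $1"
}

# Human checkpoint: show the phase output, then read y/n from standard input.
review_phase() {
  local answer
  echo "--- review: $1 ---" >&2
  echo "$2" >&2
  read -r answer || return 1
  [ "$answer" = "y" ]
}

# Run each phase in order; stop at the first checkpoint that is not approved.
run_chain() {
  local phase output
  for phase in "$@"; do
    output=$(run_phase "$phase") || return 1
    if ! review_phase "$phase" "$output"; then
      echo "stopped at checkpoint: $phase" >&2
      return 1
    fi
    echo "approved: $phase"
  done
}
```

Run as `run_chain plan migrate verify`, this pauses after every phase, which is exactly where the cost in human energy comes from: nothing proceeds until someone answers.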

L-Thread (Long Duration): High autonomy, extended duration, minimal human intervention. These can run for hours or days, executing thousands of tool calls. They require Stop Hooks: validation that intercepts the moment the agent declares it's done, runs tests and checks, and forces the agent to continue if anything fails.

This is where the Ralph Wiggum Pattern fits. Put a coding agent in a loop until the task passes validation:

while :; do cat PROMPT.md | claude-code ; done

The agent keeps failing and retrying until it passes your checks. No babysitting required. But L-Threads need trust in your test suite, your validation hooks, and the agent itself. Build that trust incrementally.
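One way to make the "retry until validation passes" idea explicit is an outer loop with its own check and an attempt budget. This is a minimal sketch under stated assumptions, not the Stop Hook mechanism itself. `run_agent` and `validate` are hypothetical stubs (the stub validation passes on the third run), and the attempt cap is an addition for this example.

```shell
AGENT_RUNS=0

# Hypothetical stub: stands in for one agent run against the prompt.
run_agent() {
  AGENT_RUNS=$((AGENT_RUNS + 1))
}

# Hypothetical validation: in practice this would run tests, linters, and so on.
# Here it simply passes once the stub agent has run three times.
validate() {
  [ "$AGENT_RUNS" -ge 3 ]
}

# Re-run the agent until validation passes or the attempt budget runs out.
run_until_valid() {
  local max_attempts="$1" attempt=1
  while [ "$attempt" -le "$max_attempts" ]; do
    run_agent
    if validate; then
      echo "passed after $attempt attempt(s)"
      return 0
    fi
    attempt=$((attempt + 1))
  done
  echo "gave up after $max_attempts attempts" >&2
  return 1
}
```

The cap is a design choice: it trades some autonomy for a bounded bill if the validation can never pass. How much you relax it is one practical measure of the trust described above.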

B-Thread (Big/Nested): One thread spawns sub-threads. From your perspective, you send one prompt and do one review. Inside that, an Orchestrator Agent delegates to specialised sub-agents: a Planning Agent, a Builder Agent, a Testing Agent, and a Review Agent. Each runs its own thread. You're creating thicker threads by nesting agentic work.

F-Thread (Fusion): Send the same prompt to multiple agents from different providers, review all results, then fuse the best pieces into one solution. Maybe Claude handles the audio processing pipeline better but Gemini's noise filtering is cleaner. You cherry-pick and synthesise.

fork three terminals: claude code, codex, and gemini - each should prototype the wake-word detection module for our voice assistant and save to temp/<agent>-wake-word/

The key step most engineers miss is synthesis. After agents finish, you aggregate, choose the best, or pick parts from each. By taking multiple shots, you increase confidence in the output. You're using compute to buy quality.
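The "choose the best" branch of synthesis can be partly mechanised. The sketch below assumes each agent's output directory contains a `report.txt` with one `PASS` or `FAIL` line per check. That file and the scoring rule are hypothetical, invented for this example. Picking parts from several candidates remains a manual judgement.

```shell
# Hypothetical scoring rule: count "PASS" lines in a candidate's report.txt.
# A real setup might run the test suite against each candidate instead.
score_candidate() {
  grep -c 'PASS' "$1/report.txt" 2>/dev/null || true
}

# Print the candidate directory with the highest score (first one wins ties).
pick_best() {
  local best="" best_score=-1 dir score
  for dir in "$@"; do
    score=$(score_candidate "$dir")
    score=${score:-0}  # a missing report counts as zero
    if [ "$score" -gt "$best_score" ]; then
      best="$dir"
      best_score="$score"
    fi
  done
  echo "$best"
}
```

A score like this only ranks candidates. It is a starting point for the review, not a replacement for reading the winning output.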

Dias also mentions a seventh type, the Z-Thread (Zero Touch), where agents ship to production autonomously. This requires comprehensive test suites, type checking, linting, integration tests, staging deployments, and automated rollback. We're not there yet for most workflows, but every improvement in validation brings it closer.

Four levers for improvement

You're improving if you're pulling these levers:

- More Threads: Use P-Threads to parallelise execution

- Longer Threads: Use L-Threads with validation hooks to extend duration before review

- Thicker Threads: Use B-Threads to nest agents within agents

- Fewer Checkpoints: Increase trust to remove human-in-the-loop steps

If you're running more threads, longer threads, thicker threads, and reviewing less often because your validation is solid, you're measurably better than before.
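To make "measurably better" concrete, you could keep a simple log of your threads and total it up over time. The sketch below assumes a hypothetical CSV file with the columns `thread_id,tool_calls,sub_agents,checkpoints`. Neither the file format nor the `summarise_threads` helper comes from the framework itself.

```shell
# Summarise a hypothetical thread log with the columns:
# thread_id,tool_calls,sub_agents,checkpoints
summarise_threads() {
  awk -F, '
    NR > 1 {                 # skip the header row
      threads += 1
      tool_calls += $2
      sub_agents += $3
      checkpoints += $4
    }
    END {
      printf "threads=%d tool_calls=%d sub_agents=%d checkpoints=%d\n",
             threads, tool_calls, sub_agents, checkpoints
    }
  ' "$1"
}
```

The four totals map loosely onto the four levers: thread count for more threads, tool calls for longer threads, sub-agents for thicker threads, and checkpoints for how often a human had to step in. Comparing one week's summary against the next gives a rough trend line.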

Start with one workflow you run regularly. Identify what thread type it is - probably a base thread. Then ask if it could be a P-Thread. Could you run three instances in parallel and pick the best result? Start there and work up the stack.